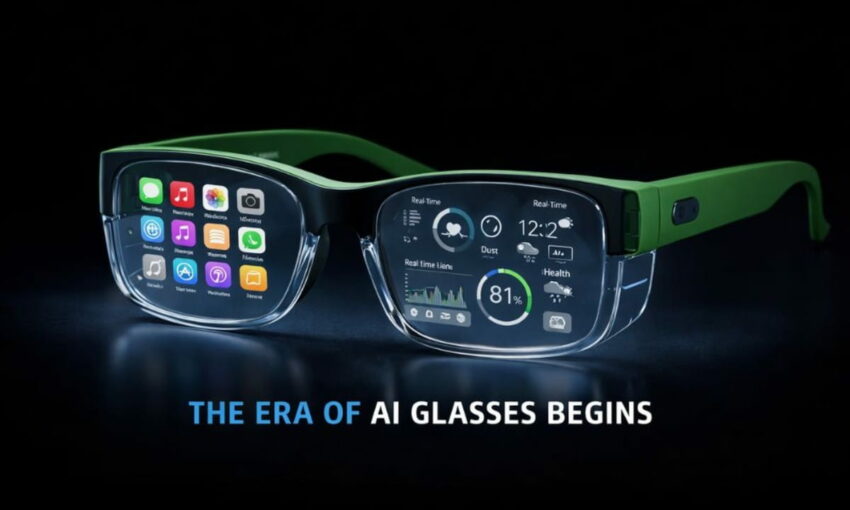

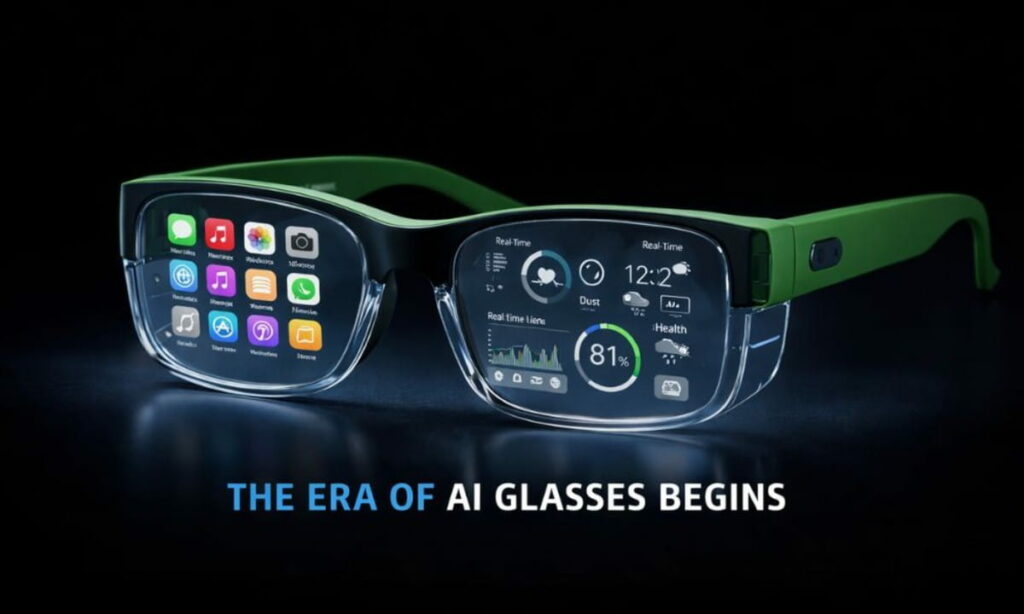

The smartphone era might just be over. Two of the world’s most powerful tech companies have their sights set on the same prize — and it sits on the bridge of your nose. Apple and Meta are both pouring resources into AI glasses. Each company believes eyewear will become the dominant computing platform of the future. But how they plan to get there tells two different stories.

Meta already has skin in the game

Meta did not wait for the market to mature. Its Ray-Ban smart glasses are already on store shelves. Users can snap photos, stream live video, and issue voice commands without touching a phone. The glasses also plug directly into Instagram and Facebook, feeding content into Meta’s broader social ecosystem.

CEO Mark Zuckerberg has left no room for interpretation about where this is heading.

He said, “It’s hard to imagine a world… where most glasses… aren’t AI glasses.”

He went further, suggesting, “People who don’t wear smart glasses… may be at a cognitive disadvantage.”

That kind of language signals urgency. Meta frames AI glasses not as a luxury or novelty, but as a competitive necessity for everyday life.

Chief Product Officer Chris Cox reinforced the company’s position bluntly, saying, “Smart glasses are the future of computing devices.”

Meta’s model relies on cloud-based AI. That means faster updates and more processing muscle, though it also means your data travels off-device. Speed and scale drive the strategy here, not caution.

Apple AI glasses have a strong competitor already

Apple, by contrast, has stayed quiet publicly. It has announced no product and no release date.

But internal signals suggest the company treats this as its most critical project in years.

Reports from inside Apple paint a vivid picture of executive priority.

One source described the internal focus bluntly: “Tim cares about nothing else.” Another added, “It’s the only thing he’s really spending his time on.”

Those remarks point to how seriously Apple views this moment. The company wants AI glasses that look like regular eyewear, with slim hardware and minimal computing. The aim is to keep the device light without sacrificing performance.

Apple also bets hard on on-device AI processing. Rather than routing data through the cloud, Apple wants to handle intelligence locally. That protects user privacy and limits exposure. CEO Tim Cook has already highlighted where this thinking leads.

He pointed to a feature gaining traction among users, saying, “One of our most popular features is Visual Intelligence.”

That capability blends real-world awareness with AI processing — a preview of how Apple plans to fuse the physical and digital worlds through wearables.

Two strategies, one finish line

The contrast between the two is not just about timing. It reflects fundamentally different philosophies about how technology should enter daily life.

Apple builds for precision. It observes, refines, and launches when the experience feels seamless. Its glasses will likely pass as ordinary eyewear. The goal is to make the technology disappear into the background.

Meta builds in public. It pushes products out, collects real-world feedback, and iterates fast. Its current glasses come with visible cameras and hardware. Functionality leads, and aesthetics follow.

Their business models diverge just as sharply. Apple targets premium buyers who are already inside its ecosystem. Device sales and ecosystem loyalty generate revenue. Meta targets mass adoption. Lower price points drive more users to its platforms, where advertising revenue follows engagement.

Developers hold serious leverage

Neither platform succeeds without a strong developer ecosystem. Apple maintains tight control over its app environment. Quality stays high, but experimentation moves slowly. Meta opens its platform more broadly. Developers can build and test new AI-driven applications much faster.

However, Apple’s existing developer community carries enormous weight. If the company releases the right tools for glasses, adoption could accelerate quickly. Developers tend to follow users, and Apple has hundreds of millions of loyal customers waiting.

The bigger picture

The push into AI glasses reflects something larger than product competition. Smartphones have matured. Annual growth has flattened. The industry needs a new platform cycle, and artificial intelligence is the engine driving the next one.

Glasses powered by AI can process context, respond to voice, and understand what the user sees. Eventually, users may not need to pull out a phone at all. They could speak, glance, or gesture instead.

For now, smart glasses complement the phone. Over time, they may replace it entirely.

Google is also watching the space closely. Competition will intensify as more players enter the race.

The consumer decides

Apple will likely arrive later with a more polished product. Meta will continue to ship, learn, and improve in real time. Both approaches have merit. Both carry risks.

But ultimately, consumers will cast the deciding vote. One platform prioritizes privacy and refinement. The other prioritizes reach and power. The question is which trade-off people will accept.

Would you choose Apple’s privacy-first approach or Meta’s feature-rich AI platform? Please drop your views about the future of AI glasses in the comments below.