Google has removed several AI Overviews from health-related searches after an investigation revealed that the feature was giving inaccurate and misleading medical advice to users searching for basic health information.

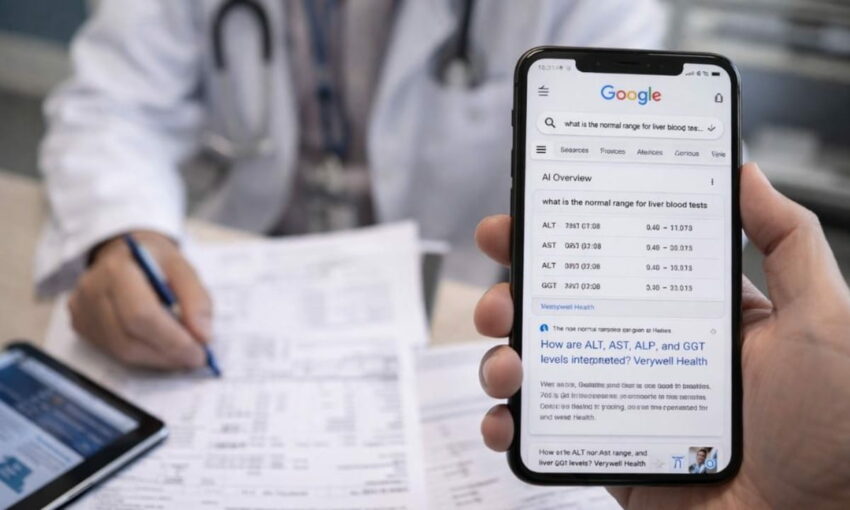

The tech giant’s decision came after reporters discovered that AI Overviews, which appear prominently at the top of search results, were delivering inaccurate guidance on interpreting blood tests, particularly liver function tests. Medical professionals who reviewed the AI-generated summaries raised serious alarms, warning that the misleading information could convince patients with potentially life-threatening conditions that their test results were normal.

AI summaries provided incomplete and potentially harmful guidance

The investigation revealed critical flaws in how Google’s AI was presenting medical information. When users searched for terms like “what is the normal range for liver blood tests,” the system generated summaries listing numerical ranges without proper context or explanation.

These AI-generated responses failed to account for essential variables that affect the interpretation of test results. The summaries ignored differences based on patient age, biological sex, ethnic background, or specific testing methods used by laboratories. In several instances, the numerical ranges displayed by the AI did not align with what medical professionals consider clinically normal.

Medical experts who examined the summaries used strong language to describe the problem. They characterized the AI-generated summaries as both “dangerous” and “alarming,” expressing particular concern about patients with advanced liver disease who might interpret the incomplete data as reassurance that their health was fine.

Company removes specific searches after findings shared

Following publication of the investigation’s findings, Google took action to remove AI Overviews for certain liver-related searches. Users searching for “what is the normal range for liver blood tests” and “what is the normal range for liver function tests” no longer see the AI-generated summaries.

A company spokesperson addressed the removals without providing specific details about the decision-making process.

“We do not comment on individual removals within Search,” the representative said. “In cases where AI Overviews miss some context, we work to make broad improvements, and we also take action under our policies where appropriate.”

Patient advocates welcome change but warn problems persist

Health advocacy organizations responded positively to Google’s decision while emphasizing that broader issues remain unresolved.

Vanessa Hebditch, director of communications and policy at the British Liver Trust, praised the removal of the problematic summaries but cautioned that the fix addresses only a narrow slice of the problem.

“This is excellent news, and we’re pleased to see the removal of the Google AI Overviews in these instances,” Hebditch said. “However, if the question is asked in a different way, a potentially misleading AI Overview may still be given and we remain concerned other AI-produced health information can be inaccurate and confusing.”

Additional testing confirmed Hebditch’s concerns. Searches using slightly different terminology, including “lft reference range” or “lft test reference range,” continued triggering AI Overviews with similar problems. The persistence of these summaries troubled health organizations monitoring the situation.

Medical complexity lost in AI simplification

Hebditch explained why liver function tests resist simple numerical summaries.

“A liver function test or LFT is a collection of different blood tests,” she noted. “Understanding the results and what to do next is complex and involves a lot more than comparing a set of numbers.”

The format of AI Overviews compounds the problem. The summaries present test names in bold formatting, making it easy for readers to overlook crucial disclaimers or context that appear in smaller text.

Perhaps most concerning, Hebditch said, was the information that the summaries omitted entirely.

“In addition, the AI Overviews fail to warn that someone can get normal results for these tests when they have serious liver disease and need further medical care,” she explained. “This false reassurance could be very harmful.”

Debate continues over AI’s role in health information

Google commands approximately 91% of the global search market, so its health information reaches enormous audiences. Even minor inaccuracies can affect millions of people who turn to search engines for medical guidance.

Sue Farrington chairs the Patient Information Forum, an organization focused on health literacy. She called the removals a positive development while stressing that much work remains ahead.

“This is a good result but it is only the very first step in what is needed to maintain trust in Google’s health-related search results,” Farrington said. “There are still too many examples out there of Google AI Overviews giving people inaccurate health information.”

Farrington emphasized that millions of adults already face challenges accessing trustworthy medical information.

“That’s why it is so important that Google signposts people to robust, researched health information and offers of care from trusted health organisations,” she added.

Other problematic health summaries remain active

Despite removing liver test summaries, Google continues displaying AI Overviews for other sensitive medical topics identified in the investigation, including cancer screening and mental health conditions. Some experts reviewing those summaries described them as “completely wrong” and “really dangerous.”

When questioned about why these summaries remain available, Google pointed to its review process. A spokesperson said the summaries link to reputable sources and include prompts encouraging users to consult healthcare professionals.

The company added that internal clinicians reviewed the flagged examples and determined “in many instances, the information was not inaccurate and was also supported by high-quality websites.”

Victor Tangermann, a senior editor at technology publication Futurism, argued the findings demonstrate Google needs additional work “to ensure that its AI tool isn’t dispensing dangerous health misinformation.”

Google maintains it only displays AI Overviews when confidence in response quality meets high thresholds. The company says it continuously monitors performance across different subject areas and updates systems when problems surface.

Medical experts counter that health information demands different standards than other search categories. Mistakes about entertainment trivia or product recommendations may inconvenience users. Health mistakes can change or end lives.

The removal of some problematic AI Overviews may reduce immediate risks to patients. However, the broader conversation about AI’s role in delivering health information to the public has only begun.
