China is moving to strengthen oversight of artificial intelligence chatbots that exhibit human-like characteristics, marking a significant regulatory shift that could fundamentally alter the nation’s rapidly expanding AI industry and create ripple effects for global investors.

During the closing weeks of 2025, China’s internet watchdog unveiled draft regulations targeting risks associated with AI chatbots that can mimic emotional responses and human interactions. These proposed measures emphasize preventing addiction, limiting emotional attachment, safeguarding personal information, and controlling content, reflecting Beijing’s determination to steer AI innovation within carefully defined ethical and ideological frameworks.

The draft guidelines, published by the Cyberspace Administration of China, are open for public comment until early 2026. This regulatory push comes as conversational AI platforms offering companionship, guidance, and virtual relationships experience explosive growth throughout Chinese markets.

Psychological dependencies trigger government intervention

Central to these proposed regulations is official anxiety about AI chatbots creating problematic emotional attachments. Authorities express concern that extended engagement with these systems may trigger addictive behaviors or mental health complications, especially among young people and psychologically vulnerable populations.

The draft framework mandates that AI companies track user interaction patterns and take corrective action when conversations indicate emotional distress or dangerous behavior. Chatbots must automatically redirect discussions involving suicidal ideation, self-injury, or gambling addiction toward human counselors or mental health professionals. Platforms would also need to display prominent warnings about excessive usage and psychological dependency risks.

Government officials justify these measures as necessary to ensure AI technologies supplement rather than replace genuine human connections while permitting continued innovation under state supervision.

Information security and ideological alignment intensify

The proposed rules heavily prioritize data protection requirements. Service providers must safeguard user information across every stage of an AI product’s existence, minimize unnecessary data gathering, and maintain transparency about personal information handling practices.

Content restrictions remain particularly stringent. AI-generated responses must conform to “socialist core values” while avoiding material that promotes violence, sexual content, or extremist ideology, or that challenges state security. This approach extends China’s established internet governance philosophy, where technological advancement operates within predetermined political parameters.

Authorities demand regular risk evaluations and honest disclosure about system capabilities and limitations, indicating a simultaneous push for corporate accountability and governmental control.

Innovation ambitions collide with regulatory constraints

The timing carries particular significance. Multiple Chinese AI startups, notably MiniMax and Z.ai, are advancing toward potential initial public offerings in Hong Kong. These forthcoming regulations could substantially affect company valuations, operational expenses, and market confidence.

Industry observers suggest companies may need to fundamentally restructure chatbot designs to minimize emotional engagement, reduce session durations, or constrain conversational complexity. While such modifications might enhance user safety, they could simultaneously hamper customer acquisition and revenue generation strategies.

Enforcement actions demonstrate regulators’ seriousness. Officials reported removing thousands of AI products throughout 2025 for violating content standards and security protocols.

China’s AI governance framework matures rapidly

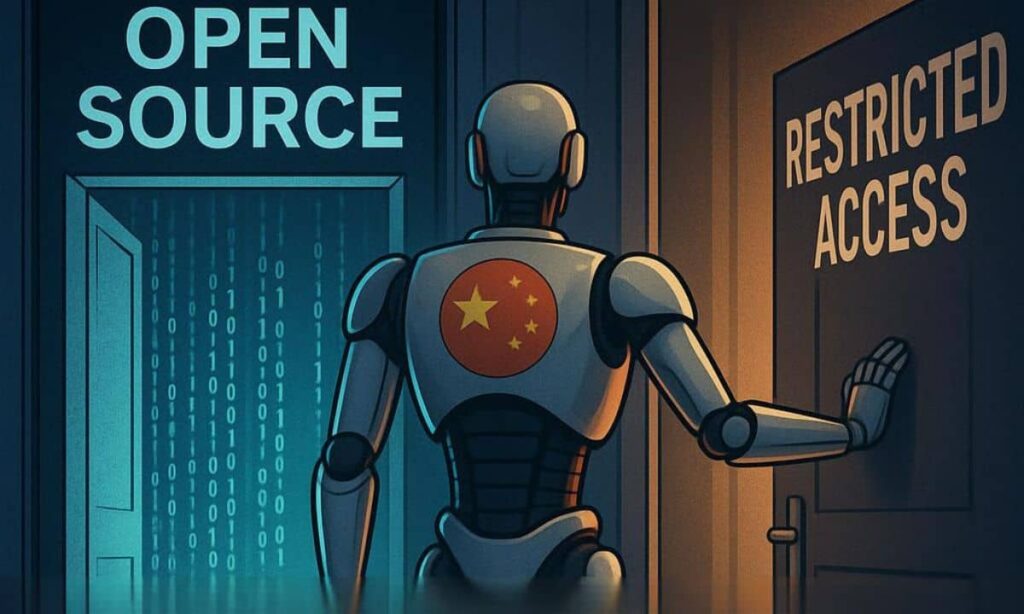

Beijing’s approach to artificial intelligence regulation has transformed substantially since worldwide interest in generative technologies exploded in 2023. That year, Chinese authorities blocked international AI services while promoting domestic alternatives, triggering a proliferation of locally engineered models.

Earlier generative AI regulations introduced in 2023 were subsequently relaxed to prevent economic disruption. The current draft specifically targets human-like chatbots, demonstrating heightened awareness of their societal and psychological impacts.

The framework also expands mandatory pre-release approval procedures. AI platforms must clear regulatory evaluations before public deployment and risk suspension or permanent removal for noncompliance.

Restrictions on virtual companionship intensify

A distinguishing characteristic of these proposals involves explicit limitations on emotional immersion. Regulators appear especially troubled by chatbots functioning as digital companions or therapists, traditionally human roles.

Developers would need to identify indicators of emotional overdependence and respond by curtailing interaction frequency or modifying conversational patterns. Enhanced protections would apply specifically to minors, incorporating age authentication and parental monitoring systems.

This initiative aligns with Beijing’s broader campaign against digital addiction, following previous restrictions on online gaming and social media access for young users.

Financial markets face new uncertainties

For investment communities, these draft regulations introduce considerable ambiguity. Compliance expenses may increase significantly, product development timelines could extend substantially, and revenue forecasts may require downward adjustments.

Conversely, establishing clear regulatory boundaries could diminish long-term uncertainty by defining permissible operational parameters. Some market analysts interpret this framework as an effort to stabilize the sector and forestall public backlash that might provoke even more restrictive future interventions.

Market reactions will largely depend on enforcement rigor and final regulatory language following the consultation period.

International comparisons reveal divergent philosophies

China’s regulatory methodology contrasts sharply with approaches adopted by other major economies. The European Union prioritizes data privacy and risk categorization, while the United States generally favors voluntary industry standards and sector-specific regulation.

China combines user protection with ideological oversight. By mandating human intervention for sensitive subjects, authorities establish a hybrid framework that restricts complete automation.

Policy experts suggest this model could influence international discussions as governments worldwide confront the societal consequences of increasingly sophisticated human-like AI technologies.

Implementation timeline and industry adaptation

The public comment period may produce regulatory modifications, as happened with earlier AI rules that were adjusted in light of economic considerations. Nevertheless, the policy direction is clear: Beijing intends for AI advancement to proceed without compromising social cohesion or governmental authority.

Despite intensifying regulation, China’s artificial intelligence sector maintains robust expansion, supported by substantial domestic market demand and state-sponsored initiatives. The critical challenge for technology companies involves rapid adaptation while preserving competitive advantages.

As these regulations progress toward final implementation in 2026, they will influence not only China’s AI ecosystem but also investor assessments of how technological innovation and state control interact within the world’s second-largest economy.
