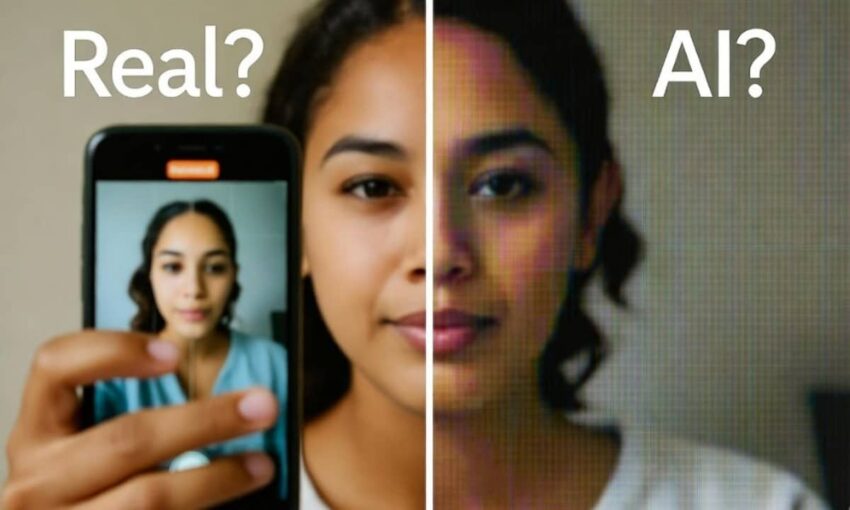

Artificial intelligence video technology is fundamentally altering how audiences perceive online content. Brief, low-resolution clips now circulate faster than fact-checkers can verify them. Viewers, media professionals, and academic researchers are falling victim to sophisticated deception. The outcome marks a watershed moment where video evidence loses its traditional credibility. AI videos have become so convincing that millions of people now share fabricated footage daily without realizing they’ve been deceived.

AI video generators advance at an alarming speed

Over the past six months, AI-powered video creation platforms have advanced dramatically. These systems produce footage that resembles smartphone recordings, dashcam video, or dated security-camera footage. They replicate the visual language of TikTok, Instagram Reels, and YouTube Shorts. The boundary separating authentic from manufactured content has become razor-thin. Countless users scroll past fabricated material without recognizing the deception.

AI videos exploit human psychology by mimicking the imperfect aesthetics people associate with authentic smartphone footage and eyewitness recordings.

Poor quality serves as a primary warning signal

“If you see a video with bad picture quality – think grainy, blurry footage – alarm bells should go off,” states Hany Farid, computer science professor at the University of California, Berkeley, and founder of deepfake detection company GetReal Security. “It’s one of the first things we look at.”

Degraded footage conceals the telltale imperfections. Modern AI systems no longer make obvious mistakes such as extra fingers or garbled text. Current errors appear in minute details. Skin textures look unnaturally flawless. Hair catches the light in unusual ways. Backgrounds show subtle inconsistencies. Objects move in ways that feel nearly correct yet contain barely perceptible flaws. Digital compression and reduced resolution make these indicators even harder to identify.

AI videos have crossed a dangerous threshold where distinguishing real footage from synthetic content has become nearly impossible for the average viewer.

Deliberate degradation hides AI fingerprints

“If I’m trying to fool people, what do I do? I generate my fake video, then I reduce the resolution… And then I add compression that further obfuscates any possible artefacts,” Farid explains. “It’s a common technique.”

Duration provides another significant indicator. AI-generated clips are typically very short, with six to ten seconds being a standard length. Longer clips are frequently stitched together from multiple generated segments, so viewers may notice a cut every few seconds. This rhythm serves as a warning signal.
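The duration signal can be sketched in a few lines of Python. This is a minimal illustration, not a detector: the function names are hypothetical, the cut timestamps are assumed to come from a viewer or a separate tool and to fall inside the clip, and the ten-second threshold is taken from the range quoted above.

```python
from typing import List


def segment_lengths(duration: float, cuts: List[float]) -> List[float]:
    """Return the length in seconds of each segment between consecutive cuts."""
    points = [0.0] + sorted(cuts) + [duration]
    return [round(end - start, 3) for start, end in zip(points, points[1:])]


def matches_short_clip_rhythm(
    duration: float, cuts: List[float], max_segment: float = 10.0
) -> bool:
    """True when no segment runs longer than max_segment seconds.

    A True result is only a hint that the clip fits the pattern described
    in the article. Plenty of genuine videos are short or heavily edited.
    """
    return all(length <= max_segment for length in segment_lengths(duration, cuts))


if __name__ == "__main__":
    # A 24-second clip with cuts at 8 and 16 seconds: three 8-second segments.
    print(segment_lengths(24.0, [8.0, 16.0]))
    print(matches_short_clip_rhythm(24.0, [8.0, 16.0]))
```

A clip that passes this check deserves a closer look at its source, nothing more.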

Experts urge caution against assumptions

However, specialists caution against hasty judgments. Low quality alone doesn’t confirm fabrication.

“If you see something that’s really low quality that doesn’t mean it’s fake. It doesn’t mean anything nefarious,” explains Matthew Stamm, director of the Multimedia and Information Security Lab at Drexel University.

Nevertheless, the trend is unmistakable. Widely shared fabrications frequently look as though they were captured on inferior equipment. Examples include footage of rabbits jumping on trampolines, a romantic encounter aboard public transportation, and a religious leader delivering passionate commentary about wealthy elites. Each accumulated millions of engagements. Each proved to be AI-generated.

Consumer apps bring synthetic video to masses

The situation intensified with the emergence of consumer applications. OpenAI’s Sora brought text-to-video generation to mainstream audiences. Users input written descriptions. The platform produces realistic footage within seconds. The application climbed download rankings rapidly. It simultaneously triggered an avalanche of synthetic material mimicking genuine recordings.

“Our usage policies prohibit misleading others through impersonation, scams, or fraud, and we take action when we detect misuse,” the company states.

However, written policies cannot prevent large-scale abuse. Digital watermarks can be removed. Technical metadata can be eliminated. Content transfers between platforms within minutes. What begins as harmless entertainment can be repurposed as misinformation, financial schemes, or character assassination.

Safeguards prove inadequate at scale

News organizations face significant challenges. A recent incident demonstrated how quickly AI content infiltrates reporting. A published story referencing social media videos required correction after audience members identified AI-generated footage. The segment was revised to acknowledge the synthetic nature. An editorial note documented the modification. The episode exposed a difficult reality. Editorial processes designed for written content and photography remain unprepared for AI video challenges.

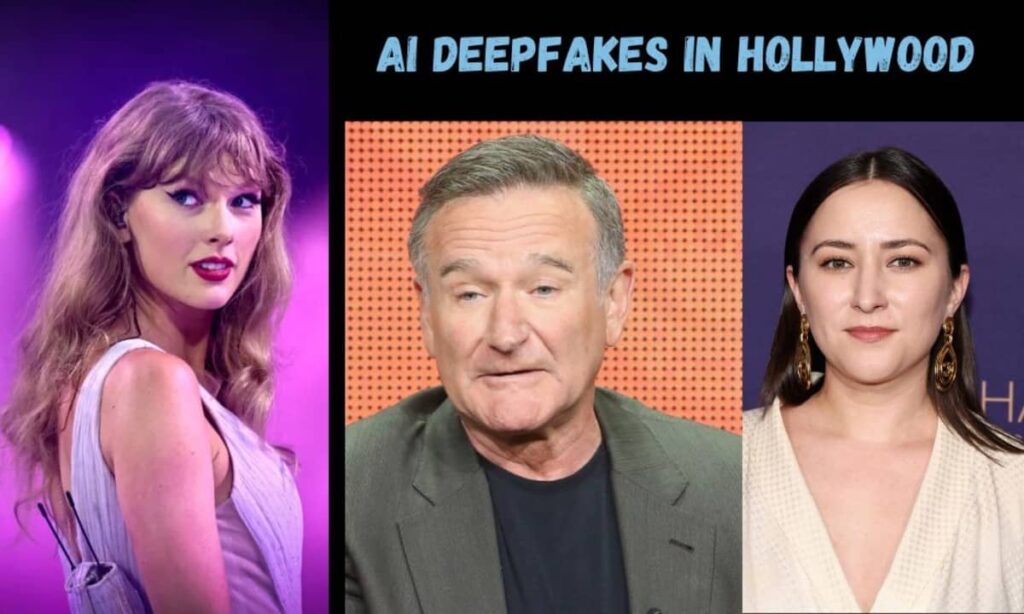

Public figures face a double-edged sword

Public figures recognize both the threats and the advantages. One remark captured the prevailing sentiment.

“If something happens really bad, just blame AI,” the speaker said. “But also they create things. It works both ways.”

This reasoning creates dual problems. It provides an excuse to dismiss authentic footage. It simultaneously enables falsehoods masquerading as legitimate news coverage.

AI videos now represent the fastest-growing category of misinformation content, outpacing manipulated photos and deepfake audio in both volume and viral reach.

Scientists are urgently developing countermeasures. They’re building forensic tools that look beyond surface appearance.

“When you generate or modify a video, it leaves behind little statistical traces that our eyes can’t see, like fingerprints at a crime scene,” Stamm notes.

These approaches examine digital noise characteristics, movement patterns, and file encoding indicators. They can identify irregularities invisible to human observers. Yet they’re not infallible. They frequently require uncompressed original files, not platform-modified versions.

Provenance systems offer a long-term solution

Parallel efforts focus on establishing content authenticity. Camera manufacturers are testing systems that record capture information during filming. AI platforms could embed distinctive markers when producing content. The objective is to establish a verifiable record for digital media. If widely implemented, it would help audiences and editors confirm where a video came from. It wouldn’t eliminate all problems. It would establish higher standards.
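The idea of binding capture information to a recording can be sketched in Python. This is a simplified illustration under stated assumptions, not how any camera maker or AI platform actually does it: the function names and metadata fields are hypothetical, and a shared secret key stands in for the public-key signatures that real provenance schemes generally rely on.

```python
import hashlib
import hmac
import json


def sign_capture(video_bytes: bytes, metadata: dict, key: bytes) -> str:
    """Bind capture metadata to the exact video bytes with a keyed hash."""
    digest = hashlib.sha256(video_bytes).hexdigest()
    payload = json.dumps(
        {"sha256": digest, "meta": metadata}, sort_keys=True
    ).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_capture(
    video_bytes: bytes, metadata: dict, key: bytes, signature: str
) -> bool:
    """Recompute the signature and compare it in constant time."""
    expected = sign_capture(video_bytes, metadata, key)
    return hmac.compare_digest(expected, signature)


if __name__ == "__main__":
    key = b"demo-key"  # hypothetical device secret, for illustration only
    meta = {"device": "example-camera", "captured_at": "2025-01-01T12:00:00Z"}
    signature = sign_capture(b"raw video bytes", meta, key)
    print(verify_capture(b"raw video bytes", meta, key, signature))
    print(verify_capture(b"edited video bytes", meta, key, signature))
```

Any change to the video bytes or the metadata makes verification fail, which is the property a provenance record needs. The sketch also shows the limitation noted earlier in the article: once a platform re-encodes the file or strips the metadata, there is nothing left to verify against.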

Public understanding must expand simultaneously. Specialists argue that surface-level clues will diminish as technology improves. The more reliable approach involves questioning video sources, identifying uploaders, and determining whether credible outlets have authenticated material. This transformation parallels how people evaluate written internet content. Origin matters more than appearance.

Social platforms bear responsibility too. They can restrict the distribution of unverified or suspicious content. They can prioritize authenticated sources. They can flag potentially synthetic material during review processes. These represent policy choices. They involve compromises. But they prove critical when AI-generated content spreads instantaneously.

For now, remember three checks: quality, duration, and source. If footage is brief, degraded, and sensational, assume nothing. Stop scrolling. Search for confirmation. Consult established news outlets. Treat unverified videos like unsubstantiated claims.
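The quality, duration, and source checks can be written down as a small Python checklist. This is a sketch for illustration only: the `Clip` fields, the `triage` function, the labels it returns, and the ten-second threshold are all assumptions, and the inputs are a viewer's own judgments rather than anything measured automatically. It leaves out how sensational a clip is, since that is hard to reduce to a yes-or-no field.

```python
from dataclasses import dataclass


@dataclass
class Clip:
    duration_seconds: float
    looks_degraded: bool    # viewer's judgment: grainy, blurry, heavily compressed
    source_confirmed: bool  # a credible outlet has authenticated the footage


def triage(clip: Clip, short_threshold: float = 10.0) -> str:
    """Return a rough next step for a clip, checking the source first."""
    # Origin matters more than appearance, so the source check comes first.
    if clip.source_confirmed:
        return "source confirmed"
    is_short = clip.duration_seconds <= short_threshold
    flags = int(clip.looks_degraded) + int(is_short)
    if flags == 2:
        return "verify before sharing"
    if flags == 1:
        return "be cautious"
    return "unverified"


if __name__ == "__main__":
    print(triage(Clip(duration_seconds=8, looks_degraded=True, source_confirmed=False)))
```

None of the labels say a clip is fake. As the experts quoted above stress, low quality and short length are reasons to check, not proof of fabrication.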

The challenge will intensify

Society will encounter more synthetic footage. The technology will advance. The imperfections will diminish. News organizations can establish AI-focused verification departments. Educational institutions can teach source verification over visual appeal. Platforms can structure systems rewarding accuracy. Lawmakers can encourage standardized authentication signatures and disclosure requirements.

Stay alert. AI-generated videos are deceiving audiences worldwide.

AI videos will continue proliferating across every platform, but coordinated action from technology companies, educators, and policymakers can help audiences develop the critical skills needed to identify synthetic content before sharing it.

What measures do you believe would most effectively combat synthetic video misinformation? Should platforms implement mandatory verification systems, or does responsibility rest primarily with viewers and educators? Share your perspective and experiences with AI-generated content in the comments below.