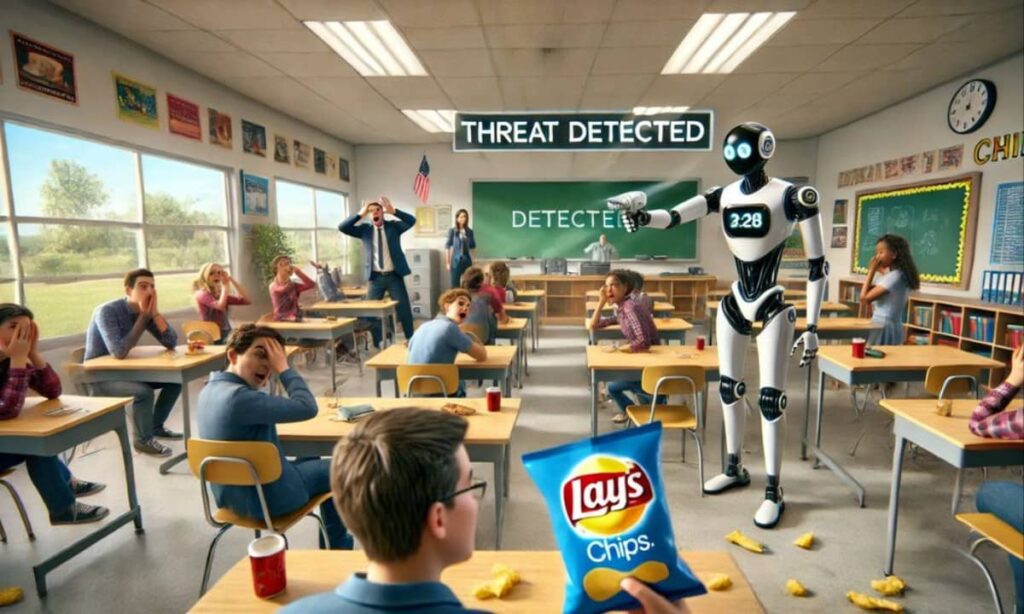

In a startling incident highlighting the risk of AI error, a 16-year-old Baltimore student was briefly handcuffed by police after an artificial intelligence system incorrectly identified a bag of chips in his pocket as a firearm, raising concerns about the reliability of AI technology in sensitive settings.

Taki Allen, the student involved, recounted the harrowing experience to WMAR-2 News.

“Police showed up, like eight cop cars, and then they all came out with guns pointed at me, talking about getting on the ground,” he said.

Allen had finished football practice and put a Doritos bag in his pocket. About twenty minutes later, officers arrived with weapons drawn.

“He told me to get on my knees, arrested me, and put me in cuffs,” Allen added.

Police and the school respond to an AI error

The Baltimore County Police Department said that Allen was handcuffed but not arrested, stating that “the incident was safely resolved after it was determined there was no threat.”

Police acted on information provided by the school, where an AI system had flagged a possible firearm. Although human moderators reviewed the alert and found no danger, school administrators failed to notice the update and escalated the matter to local police.

In a letter to parents, Principal Kate Smith explained that the school’s resource officer called for backup “out of an abundance of caution.”

Officers searched Allen and quickly confirmed he did not possess a weapon. Despite the clarification, the incident has sparked concerns about the use of AI-powered detection in schools. Local councilman Izzy Pakota called on Baltimore County Public Schools to reevaluate their procedures surrounding AI-powered weapon detection systems.

AI gun detection system under scrutiny

Omnilert, the company that provided the AI system used at the school, described the incident as an example of the tool functioning as intended, with human reviewers confirming there was no weapon within seconds of the initial detection.

However, the company acknowledged the challenge of accurate recognition on its website, stating, “Real-world gun detection is messy.”

Allen expressed skepticism about the technology after the incident, saying, “I don’t think no chip bag should be mistaken for a gun at all.”

He now waits inside after practice because he feels unsafe outside with a snack or drink in his hand, worried that another AI error could put his life at risk.

The reliability of AI weapon detection has been questioned before. Last year, Evolv Technology, a US security company, was barred from making unsupported claims about its scanners, which were installed in schools and stadiums nationwide. The company had overstated the scanners’ capabilities even though they failed to detect certain weapons.

Federal judges caught in AI errors, sparking calls for oversight

Concerns about AI extend beyond schools. Two federal judges have admitted that court staff used AI tools to prepare rulings that were later withdrawn because of errors. U.S. District Judge Julien Xavier Neals of New Jersey and U.S. District Judge Henry Wingate of Mississippi acknowledged the mistakes in letters to Sen. Chuck Grassley, who chairs the Senate Judiciary Committee.

Grassley described the rulings as “error-ridden” and demanded answers after lawyers flagged serious inaccuracies.

Neals revealed that a law school intern used ChatGPT to conduct unauthorized legal research, resulting in a flawed draft order in June. Wingate admitted that a law clerk used Perplexity AI to draft part of a July ruling in a civil rights case, which contained errors and had to be replaced. Both judges have since implemented stronger review processes and bans on AI use in their chambers.

Grassley praised the judges for coming forward but emphasized the need for broader action, stating that the judiciary must establish clear, permanent policies to prevent “laziness, apathy, or overreliance on artificial assistance” from undermining trust in the courts. Lawyers across the country have also faced reprimands for submitting AI-generated filings with fake citations or factual mistakes, with some judges imposing fines to deter future misuse.

Broader debate over AI trust and the need for stricter oversight

The stories of a teenager misidentified as armed and federal judges forced to retract rulings highlight the risks of deploying AI in sensitive areas. From schools to courtrooms, mistakes can have serious consequences, fueling calls for stricter oversight, clearer policies, and more human responsibility wherever AI is used.

Advocates argue that AI technology can improve safety and efficiency, while critics warn that it can spread errors quickly and damage public trust. For Allen and his family, the incident serves as a personal reminder of the potential dangers of AI misidentification. For the judiciary, the mistakes expose how even limited use of AI tools can undermine credibility.

As AI expands into more domains, the consequences of its errors are becoming more visible in daily life, sharpening the debate over how much to trust the technology and which safeguards are needed to ensure it is used responsibly and ethically.
