An international movement demanding a temporary halt to the development of AI superintelligence gained considerable momentum this Wednesday. Hundreds of influential voices, from Nobel Prize winners to members of the British royal family, attached their names to a declaration cautioning against uncontrolled AI advancement. The statement arrived amid mounting worries about false information spreading through existing AI platforms, and in the same week as a British television demonstration featuring a synthetic news presenter.

International petition seeks development freeze

A collaborative declaration issued through the Future of Life Institute demands “a prohibition on the development of superintelligence” pending two conditions: achieving “broad scientific consensus that it will be done safely and controllably” alongside “strong public buy-in.”

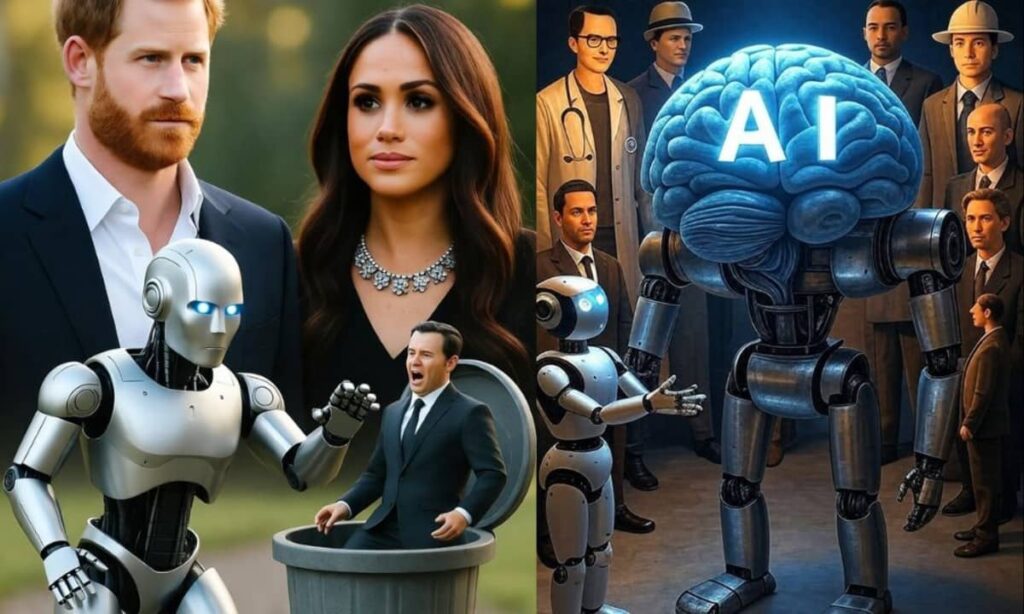

The manifesto attracted support from over 800 prominent individuals. Signatories include Prince Harry alongside Meghan, Duchess of Sussex, artificial intelligence trailblazer Geoffrey Hinton, retired U.S. Joint Chiefs of Staff Chairman Mike Mullen, Virgin Group founder Richard Branson, musician Will.i.am, and Steve Bannon, who previously advised the Trump administration.

Anthony Aguirre, the institute’s executive director and a physicist at the University of California, Santa Cruz, argues that AI progress has outpaced public awareness and input.

“We’ve, at some level, had this path chosen for us by the AI companies and founders and the economic system that’s driving them, but no one’s really asked almost anybody else, ‘Is this what we want?’,” he explained to journalists.

The organization was founded in 2014, with Elon Musk among its early backers. Today, Ethereum creator Vitalik Buterin ranks among its principal supporters. The institute says it does not accept funding from companies pursuing artificial general intelligence.

Aguirre highlighted the necessity for worldwide cooperation agreements. He drew parallels between AI oversight and existing treaties governing nuclear weapons and biological warfare.

“This is not what the public wants. They don’t want to be in a race for this,” he stated.

Technology giants maintain aggressive timelines

While voices demanding caution grow louder, leading tech corporations show no signs of slowing down. Meta’s CEO Mark Zuckerberg declared in July that superintelligence was “now in sight.” OpenAI chief executive Sam Altman has predicted it could arrive before 2030.

Meanwhile, Elon Musk — whose xAI venture competes in this space — issued an earlier warning that digital superintelligence “is happening in real time.”

Many companies, including OpenAI, Google, and Microsoft, continue pouring billions into advanced machine learning models and computing infrastructure. Numerous researchers anticipate that artificial general intelligence — systems matching human intellectual capabilities — may rapidly evolve into superintelligence, where machines eclipse human cognitive abilities entirely.

Notably absent from the institute’s petition were signatures from any of these technology leaders. The White House declined to issue a statement. Public opinion surveys reveal Americans remain split almost evenly: 44% expect AI to improve their lives, whereas 42% expect it to make things worse.

Current systems show troubling weaknesses

Fresh evidence highlighting problems with today’s AI technologies emerged from collaborative research conducted by the European Broadcasting Union and the BBC. Their examination of over 2,700 outputs from prominent models — ChatGPT, Google’s Gemini, Microsoft’s Copilot, and Perplexity — revealed that 45% contained serious deficiencies.

Flawed sourcing represented the predominant issue, affecting nearly one-third of all responses analyzed. Some instances showed AI platforms delivering information without proper source support or incorrectly attributing statements. Factual accuracy problems appeared in 20% of answers. Another 14% demonstrated inadequate contextual framing.

The report documented specific failures. ChatGPT incorrectly identified Pope Francis as the serving pontiff several months following his death. Perplexity wrongly asserted that surrogacy remains illegal in Czechia. Gemini posted the worst performance, with 76% of its outputs containing sourcing defects.

Jean Philip De Tender from the EBU and Pete Archer representing the BBC jointly authored the report’s introduction. They pressed companies to make fixing these flaws a priority.

“They have not prioritised this issue and must do so now,” they emphasized. “They also need to be transparent by regularly publishing their results by language and market.”

British broadcaster’s synthetic anchor sparks debate

Public skepticism intensified this week following a Channel 4 revelation in the United Kingdom. The network disclosed that the host presenting its documentary “Will AI Take My Job?” wasn’t human but an AI-constructed virtual presenter.

The program investigated workplace automation trends before concluding with the digital anchor’s disclosure: “I don’t exist, I wasn’t on location reporting this story. My image and voice were generated using AI.”

The AI presenter was created by Seraphinne Vallora for Kalel Productions. The broadcast marked the first British television show fronted by an artificial intelligence presenter. It aimed to test how convincingly a machine can pass for a credible journalist.

Louisa Compton, who oversees news and current affairs at Channel 4, clarified that the demonstration wasn’t intended as a permanent strategy. Instead, it served as a cautionary illustration of “how disruptive AI has the potential to be — and how easy it is to hoodwink audiences with content they have no way of verifying.”

Research commissioned for the program surveyed 1,000 company executives. Results showed 76% have already adopted AI for tasks previously handled by human workers. Forty-one percent acknowledged reducing their workforce, while nearly half anticipate additional job cuts within the next five years.

The stunt mirrored previous controversies surrounding “Tilly Norwood,” an AI-created actress that triggered protests from entertainment industry workers and labor organizations. SAG-AFTRA condemned such digital characters as “computer-generated content untethered from the human experience.”

Momentum builds for regulatory action

From royal endorsements to flawed algorithmic outputs and media demonstrations, a pattern emerges with increasing clarity: artificial intelligence technology races ahead while safety mechanisms lag. Demands for pausing superintelligence research have expanded beyond academic circles. Today’s supporters include creative professionals, elected officials, defense establishment figures, and cultural leaders worldwide.

Society faces intensifying questions about whether slowing development remains possible — or advisable — before AI crosses thresholds that could fundamentally transform economic systems, national security frameworks, and human society itself.
