Google has quietly introduced a mobile application that turns smartphones into capable artificial intelligence workstations. The Google AI Edge Gallery app enables users to operate sophisticated AI models directly on their devices without requiring internet connectivity. This experimental Android application bridges Hugging Face’s extensive AI development ecosystem with Google’s on-device processing technologies. An iOS version will debut shortly.

The innovative app empowers users to discover, install, and execute AI models capable of creating images, providing intelligent responses, developing software code, and performing numerous other tasks. Rather than transmitting sensitive information to remote cloud servers, these machine learning models operate entirely within the smartphone’s processing unit.

This revolutionary approach enhances user privacy, minimizes response delays, and guarantees artificial intelligence functionality regardless of network availability.

Offline AI advantage

Traditional cloud-based artificial intelligence systems typically offer superior computational capabilities compared to local alternatives. However, they demand constant internet access. Users who prioritize protecting confidential information increasingly favor on-device machine learning inference. This technology also keeps AI available during network outages or in areas with limited connectivity.

Google acknowledges that “performance outcomes may differ significantly.” Advanced smartphones equipped with robust hardware naturally execute models more efficiently. Model complexity plays an equally important role. Larger neural networks require extended processing time for tasks such as analyzing visual content or generating detailed responses.

App features and interface

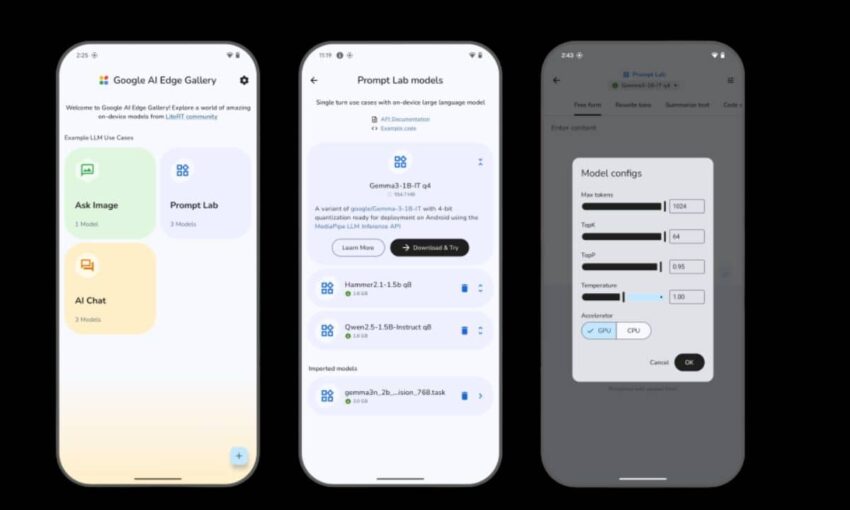

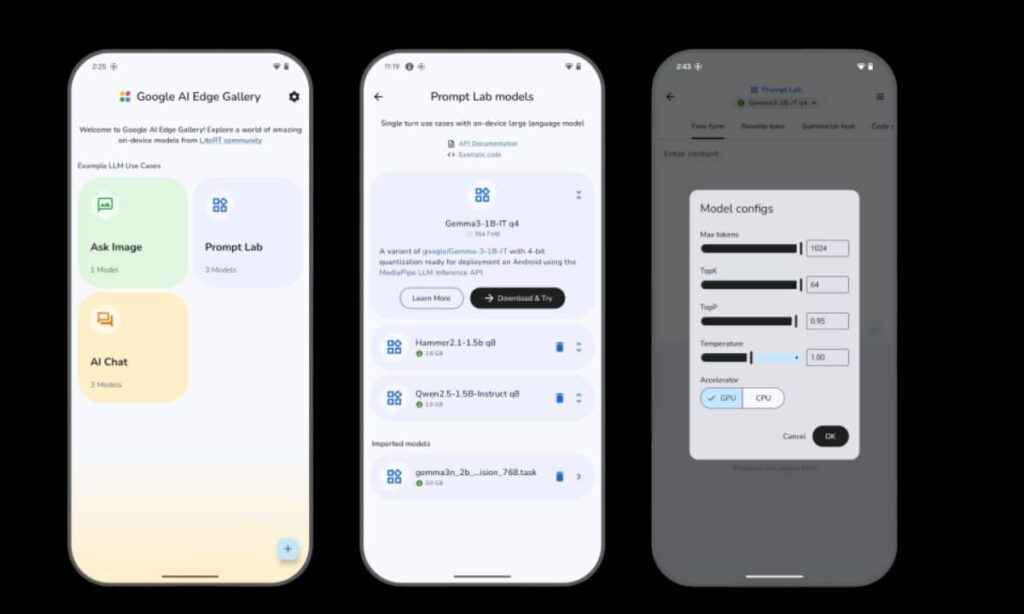

Users can install Google AI Edge Gallery directly from its official GitHub repository. The interface presents intuitive shortcuts for common AI applications, including “Ask Image” and “AI Chat” functions. Selecting any option displays compatible models optimized for specific tasks. Google’s Gemma 3n model ranks among the premier choices for text-generation activities.

Gemma models represent Google DeepMind’s latest innovation in compact AI systems. The Gemma 3 series made its official debut in March 2025. The streamlined 1 billion-parameter version (Gemma 3 1B) is optimized for mobile devices. This efficient model handles fundamental generative tasks without requiring cloud computing resources.

The application features a dedicated “Prompt Lab” workspace where users initiate single-session AI operations. Pre-built templates streamline common functions like content summarization and text revision. Customizable settings allow users to adjust model behavior before execution.

Developer involvement

Google AI Edge Gallery operates under an Apache 2.0 open-source license. This license permits developers to use and modify the application for both commercial and personal projects, provided they retain the required license and attribution notices. Google actively encourages AI community members to experiment with the platform and contribute valuable feedback regarding user experience and code optimization.

Comprehensive documentation available on GitHub provides detailed installation procedures and system compatibility specifications. The documentation emphasizes that the Android release remains an “experimental Alpha version.” The iOS launch is scheduled for the upcoming weeks.

Privacy and security

On-device artificial intelligence significantly strengthens data protection measures. Instead of routing personal images or sensitive prompts through external data centers, machine learning processing occurs entirely within the user’s device.

This approach attracts individuals concerned about sharing confidential information with cloud-based services. Industries such as healthcare, legal services, and finance particularly benefit from offline AI’s reduced exposure risks.

Performance considerations

Smartphones featuring dedicated AI acceleration chips, including Google’s Tensor G3 processor or equivalent hardware from competing manufacturers, demonstrate superior model performance. Older devices lacking specialized AI cores may experience reduced processing speeds. Users should select models matching their hardware specifications to prevent excessive delays.

For instance, a smartphone running the 1 billion-parameter Gemma 3 1B model typically generates text responses within seconds. Attempting to operate a 27 billion-parameter variant could require significantly longer processing times. The application interface clearly warns users about these performance variations.
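
A back-of-the-envelope calculation helps explain the gap between these two models. The Python sketch below estimates how much memory the weights alone would occupy and compares that with a phone's RAM. The 4-bit quantization, the 8 GB device, the 50% usable-memory share, and the function names are illustrative assumptions, not figures from Google or from the app.

```python
# Rough weight-memory estimate. Illustrative assumptions only:
# 4-bit weights, an 8 GB phone, and half of RAM usable by one app.

BYTES_PER_GB = 1e9


def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory needed to hold the model weights alone, in GB."""
    return num_params * bits_per_weight / 8 / BYTES_PER_GB


def fits_on_device(num_params: float, bits_per_weight: int,
                   device_ram_gb: float, usable_fraction: float = 0.5) -> bool:
    """Return True if the weights fit in the assumed usable share of RAM."""
    budget_gb = device_ram_gb * usable_fraction
    return weight_memory_gb(num_params, bits_per_weight) <= budget_gb


if __name__ == "__main__":
    # Hypothetical setup: 4-bit quantized weights on a phone with 8 GB of RAM.
    for name, params in [("Gemma 3 1B", 1e9), ("Gemma 3 27B", 27e9)]:
        size = weight_memory_gb(params, bits_per_weight=4)
        ok = fits_on_device(params, bits_per_weight=4, device_ram_gb=8)
        print(f"{name}: ~{size:.1f} GB of weights, fits: {ok}")
```

Under these assumptions, the 1B model needs about 0.5 GB for its weights, while the 27B variant needs about 13.5 GB, more than the hypothetical phone has in total. Real memory use would be higher once activations and runtime overhead are included.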

Model variety

Hugging Face maintains an extensive repository containing thousands of publicly available AI models spanning natural language processing, computer vision, and additional specialized domains. Google AI Edge Gallery integrates seamlessly with this vast ecosystem. Users can explore image-generation networks, text-to-speech systems, question-answering platforms, and code-development tools.

Available models range from several hundred million parameters to multiple billions. Researchers have trained these systems using public datasets for diverse applications, including summarization, translation, classification, and object detection. Users can examine detailed metadata covering licensing terms and training data sources to ensure models meet their specific requirements.

Early feedback

Initial testing phases reveal positive user reception regarding the application’s straightforward design. Beta participants report that model initialization typically completes within seconds on adequately equipped devices. Some users noted that exceptionally large models occasionally fail to load properly or cause system crashes. Google actively solicits community feedback through GitHub issue tracking and developer forums.

Developers can fork the GitHub repository to experiment with specialized models. Several early adopters have already begun developing niche applications, including offline translation tools designed for field researchers. Google plans to integrate insights from community contributions into future software updates.

Looking ahead

Offline artificial intelligence on mobile platforms represents an emerging technological frontier. Google’s experimental launch aims to accelerate industry innovation. As smartphones incorporate increasingly powerful AI accelerators, developers anticipate that on-device models will handle progressively complex computational tasks. Sectors including journalism, education, and creative industries could benefit from local AI applications that maintain privacy while functioning in areas with unreliable internet access.

The iOS edition of Google AI Edge Gallery should launch later this month, extending the application’s reach beyond Android users. Future updates may incorporate additional model frameworks beyond Hugging Face, further expanding user options.

Sustainable innovation

By releasing the application as open-source software, Google cultivates collaborative development. Contributors can suggest enhancements, optimize performance for various hardware configurations, and integrate local AI features into independent projects. The permissive terms of the Apache 2.0 license lower the legal barriers to widespread adoption.

Google executives view on-device AI as a logical progression from cloud-based services. AI infrastructure leadership emphasizes that privacy protection and processing speed represent crucial elements for artificial intelligence’s next evolutionary phase. Development teams plan to refine the user experience based on community and user feedback.
