A leading professional services firm has agreed to return a portion of a government payment after acknowledging that generative AI was used in a high-value compliance assessment later found to contain significant errors.

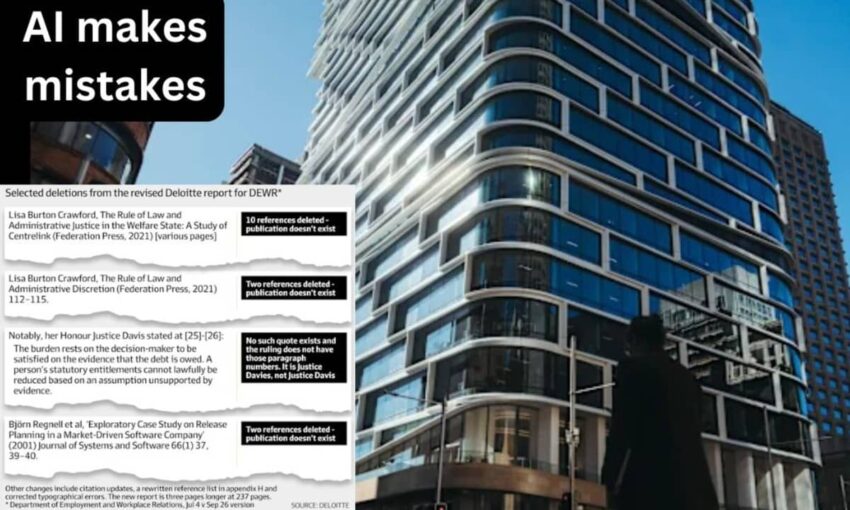

Deloitte Australia will reimburse part of the $440,000 contract payment following revelations that its report contained multiple fabricated citations, invented legal references, and artificially generated content that was not initially disclosed to officials.

Critical mistakes force document withdrawal

The Department of Employment and Workplace Relations (DEWR) commissioned the analysis to evaluate automated systems governing welfare payment enforcement. Released in July, the document faced intense scrutiny when an academic expert identified numerous factual errors that raised concerns about its credibility.

Dr. Christopher Rudge of the University of Sydney found that the errors included three completely fictional academic papers, two nonexistent legal documents, and a manufactured quote falsely attributed to Federal Court proceedings.

The consulting giant subsequently published a corrected version that eliminated the bogus references, fixed spelling mistakes, and completely reconstructed its source documentation.

Consulting giant acknowledges AI technology deployment

The replacement document contained a low-key disclosure that automated language technology had played a role in the project’s development. Hidden within the technical methodology description, the firm revealed it employed “a generative AI large language model (Azure OpenAI GPT-4o) based tool chain licensed by DEWR and hosted on DEWR’s Azure tenancy.”

According to the statement, this technology helped address “traceability and documentation gaps” during the research process. The revelation validated Rudge’s earlier theory that the document’s deficiencies stemmed from AI “hallucinations” — instances where machine learning systems generate plausible-sounding but completely false information.

“The evidence is now conclusive,” Rudge noted. “The firm has confirmed deploying generative AI for fundamental analytical work, yet never revealed this fact upfront.”

The academic emphasized that the report’s conclusions lack reliability because “artificial intelligence performed the primary examination.”

Reputation crisis for industry leader

This incident has caused significant reputational damage to a firm that generates a substantial portion of its $107 billion annual worldwide income by advising organizations on artificial intelligence implementation and promoting its responsible deployment.

The company has publicly promoted extensive AI utilization while emphasizing the critical need for human validation — precisely the protection that appears missing in this situation.

Deloitte has secured approximately $25 million in agreements with DEWR since 2021. This scandal threatens to undermine confidence in the firm’s ongoing relationship with the government agency.

Federal agency issues statement

DEWR confirmed that the consulting firm agreed to forfeit the contract’s final payment, though officials declined to specify the exact dollar amount.

Department representatives maintained “the core findings of the independent assessment remain valid, with no alterations to the final recommendations.”

Asked whether automated technology caused the inaccuracies, the department declined to clarify. Officials also would not say whether the consulting firm would receive future government assignments.

Employment Minister Amanda Rishworth’s office directed all inquiries to the department.

Debate over technology versus human failure

The consulting firm launched its own internal investigation following the controversy. A person familiar with the internal findings indicated that the company attributed the errors to human mistakes rather than misuse of the technology. However, skeptics point out that the fictional citations precisely match the pattern of errors commonly produced by AI systems.

The updated document removed several references to imaginary academic research allegedly written by Professor Lisa Burton Crawford at the University of Sydney and Professor Björn Regnell from Lund University in Sweden.

It also eliminated a fabricated citation involving a crucial robo-debt legal case, *Deanna Amato v Commonwealth*, which featured an invented quotation and an incorrectly spelled judicial name.

Broader industry concerns

The controversy underscores mounting concerns about unregulated artificial intelligence deployment in critical professional environments. Organizations increasingly rely on automated systems to reduce expenses and speed up production, but this case demonstrates the dangers when quality controls fail.

Both the original and revised versions concluded that DEWR’s welfare monitoring infrastructure suffered from serious weaknesses, including inadequate documentation, unidentified technical problems, and an overly harsh enforcement approach that magnified damage from system failures. These determinations align with a Commonwealth Ombudsman investigation that previously found hundreds of welfare payment suspensions violated regulations.

Yet for critics such as Rudge, the analysis has lost all credibility.

“The recommendations cannot be trusted when the entire report rests on flawed, initially hidden, and questionable methodology,” he stated.

Future ramifications

This episode contributes to expanding discussions about corporate responsibility and openness in the artificial intelligence era. For the consulting firm, it creates a credibility problem precisely when clients expect the organization to model best practices in responsible technology adoption.

For government agencies, the situation highlights questions about contractor vetting, supervision protocols, and the dangers of incorporating AI tools into official assessments without transparent disclosure requirements.

As businesses increasingly integrate machine learning capabilities into their workflows, this case will likely serve as an important warning — demonstrating that excessive trust in technology can cause even prominent consultancies to fail the standards they promote to others.

The incident reveals the urgent need for clearer guidelines governing AI use in government contracts, stronger verification processes for automated content, and mandatory transparency about technology deployment in official documents. Without such safeguards, similar failures may become increasingly common as artificial intelligence adoption accelerates across professional services.
